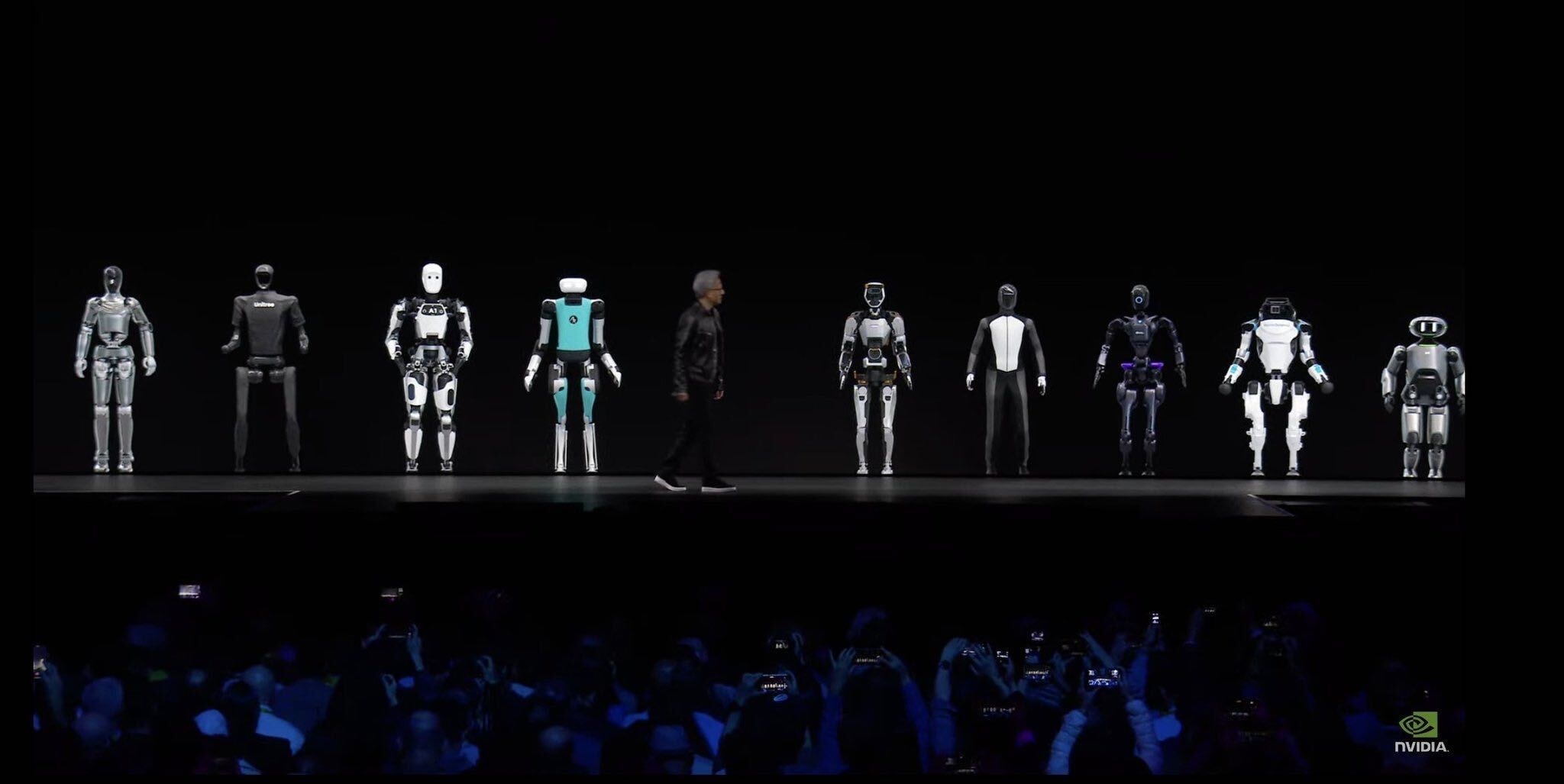

GTC 2024 Jensen Huang Keynote (March 18, 2024) — The moment that launched the era of Physical AI


Physical AI: Why the Sudden Attention?

In 2024, after NVIDIA CEO Jensen Huang put "Physical AI" front and center at GTC, the term became a central topic in the AI industry.

Just as ChatGPT transformed knowledge work, there is growing expectation that AI will now extend into the physical world. Picture robots that fold laundry, organize logistics, and make coffee: many people feel that future is getting closer.

This knowledge base is a guide to understanding Physical AI.

Introduction Guide: Read in Order

If you want to systematically understand Physical AI, read the documents below in order.

1. Defining Physical AI

What is Physical AI? The origin of the term and the scope of its definition

2. From Specialist to Generalist

Past robots were Specialists; Physical AI aims for Generalists.

3. What are RFM & VLA?

The core technology for building Generalist robots: RFMs (Robot Foundation Models) and VLA (Vision-Language-Action) models

4. The Action Data Scaling Problem

Why VLAs can't easily replicate the success of LLMs: the data bottleneck

Explore Further

After completing the introduction guide, feel free to explore topics that interest you.

Insight Essays

- VLA & RFM Progress - Ongoing development of VLA and RFM

- Teleoperation - Collecting data by controlling robots

- Non-Teleop Data - Other data collection methods

- Simulation & World Model - Using virtual environments

- Humanoid Design - Why humanoids?

- Tactile Sensing - Vision alone isn’t enough

- Physical vs Cognitive AI - Two aspects of intelligence

- Community Approaches - The open source ecosystem

Models, Companies, Hardware

Browse Models, Companies, Hardware, and People in the left sidebar, or use the Graph Index.

External Resources

- LeRobot (Hugging Face) - Open-source robot learning framework

- Open X-Embodiment - Cross-robot dataset

- NVIDIA Isaac - Robot simulation platform