What Is a Scaling Law?

In the LLM field, a scaling law is the empirical observation that increasing model size, data volume, and compute yields predictable performance improvements, typically following a power law.

| Factor | Description |
|---|---|
| Model Size | More parameters → Better performance |
| Data Volume | More training data → Better performance |
| Compute | More training compute → Better performance |

This relationship justified investment in large-scale models like GPT-3 and GPT-4. If the same relationship holds for robotics, companies will have a clear incentive to invest in large-scale data collection and training.
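In the LLM literature, "predictable" usually means that loss falls roughly as a power law in each factor, which shows up as a straight line on a log-log plot. The sketch below fits such a line by least squares and extrapolates it. The data points are synthetic, generated from a known exponent purely for illustration, and `fit_power_law` and `predict` are hypothetical helper names rather than anything from the cited work.

```python
import math

def fit_power_law(xs, ys):
    """Fit y = a * x**(-b) by least squares in log-log space; return (a, b)."""
    lx = [math.log(x) for x in xs]
    ly = [math.log(y) for y in ys]
    n = len(xs)
    mx, my = sum(lx) / n, sum(ly) / n
    slope = (sum((u - mx) * (v - my) for u, v in zip(lx, ly))
             / sum((u - mx) ** 2 for u in lx))
    intercept = my - slope * mx
    return math.exp(intercept), -slope

def predict(a, b, x):
    """Evaluate the fitted power law at x."""
    return a * x ** (-b)

# Synthetic, noise-free data (an assumption for illustration only):
# loss = 5 * hours**(-0.3)
hours = [100, 1_000, 10_000, 100_000]
loss = [5.0 * h ** (-0.3) for h in hours]

a, b = fit_power_law(hours, loss)
forecast = predict(a, b, 1_000_000)  # extrapolate one decade beyond the data
print(f"a={a:.3f}, b={b:.3f}, forecast={forecast:.4f}")
```

With real measurements the points would be noisy, and the interesting question is whether the fitted line keeps holding as the scale grows.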

Robotics Scaling Law: Current Evidence

Generalist AI’s Claims

Generalist AI claims that its GEN-0 model, trained on 270,000 hours of real physical interaction data, exhibits robotics scaling laws.

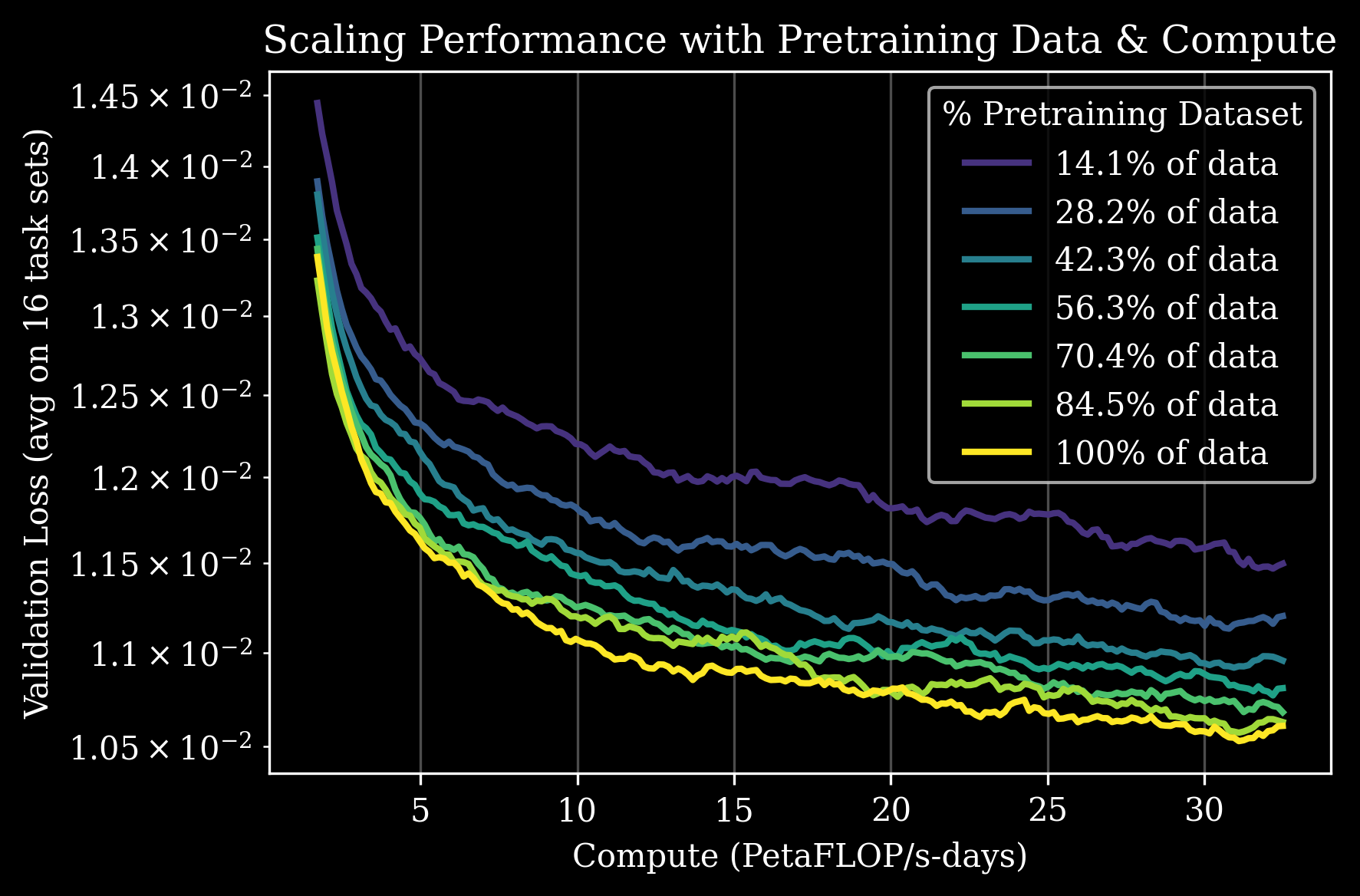

GEN-0 Scaling Law: Predictable performance improvement with data/compute increase (Source: Generalist AI)

Key Findings:

- Data ↑ → Performance ↑ (predictable improvement)

- Compute ↑ → Performance ↑ (consistent improvement)

- 7B Parameter Threshold: 1B models show “rigidity” and stop learning, while models at 7B+ internalize the data and keep improving

| Parameters | Phenomenon |
|---|---|
| 1B | Fails to absorb complex data, learning stagnates |
| 7B+ | Data internalization, continuous improvement, adapts to new tasks |

Generalist AI claims this could be the “GPT-3 moment” for robotics.

NVIDIA GR00T’s Synthetic Data Experiment

GR00T N1 systematically validated the scaling effect of synthetic data.

| Data Type | Scale | Generation Time |
|---|---|---|
| Real teleoperation | 88 hours | - |
| Simulation trajectory | 780,000 trajectories | 11 hours |
| Neural trajectory | 300,000 trajectories | 1.5 days (3,600 GPUs) |

Key Results:

- +40% performance improvement with synthetic data (vs. real data only)

- 780K simulation trajectories are equivalent to about 6,500 hours of human demonstrations

- Neural trajectories add +5.8% additional improvement on average
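The reported figures imply two derived quantities that make the result easier to interpret: the average human demonstration time each simulated trajectory stands in for, and the speed-up of simulation over human collection. The arithmetic below uses only the numbers quoted above; the variable names are illustrative.

```python
SIM_TRAJECTORIES = 780_000   # simulation trajectories (from the table above)
HUMAN_EQUIV_HOURS = 6_500    # reported human-demo equivalent
GENERATION_HOURS = 11        # reported generation time

# Average human demonstration time represented by one trajectory.
seconds_per_trajectory = HUMAN_EQUIV_HOURS * 3600 / SIM_TRAJECTORIES

# How much faster simulation produced the data than humans would have.
speedup = HUMAN_EQUIV_HOURS / GENERATION_HOURS

print(seconds_per_trajectory)   # 30.0
print(f"{speedup:.1f}x")        # 590.9x
```

In other words, the equivalence corresponds to about 30 seconds of human demonstration per trajectory, produced roughly 590 times faster than real-time collection.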

Physical Intelligence π Series

π0 demonstrated the feasibility of generalist policies, training on 10,000+ hours of teleoperation data collected across 8 robot platforms.

Why is Robotics Scaling Difficult?

LLM vs Robotics Data

| Aspect | LLM | Robotics |
|---|---|---|
| Data Source | Internet (effectively unbounded) | Physical interaction (limited) |
| Collection Cost | Crawling (cheap) | Teleoperation (expensive) |
| Data Format | Text (uniform) | Various robots/sensors (heterogeneous) |
| Validation | Automatable | Requires physical verification |

Action Data Scaling Problem

As discussed in The Action Data Scaling Problem, collecting robot action data is inherently difficult:

- Physical Constraints: The robot must physically move

- Time Cost: One hour of data takes at least one hour of work

- Quality Control: Depends on human operator skill

- Safety Issues: Risk of hardware damage on failure
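The time-cost point above can be turned into a rough planning estimate. The sketch below is a back-of-the-envelope model, not a description of how any of the companies mentioned here plan collection: the class name, the number of stations, the daily operating hours, and the overhead factor are all hypothetical values chosen for illustration. Only the 10,000-hour target mirrors a figure quoted in this article.

```python
from dataclasses import dataclass

@dataclass
class CollectionPlan:
    target_hours: float    # hours of usable robot data wanted
    n_stations: int        # teleoperation stations in parallel (assumed)
    hours_per_day: float   # operating hours per station per day (assumed)
    overhead: float = 1.0  # work hours per usable data hour, >= 1.0 (assumed)

    def operator_hours(self) -> float:
        """Total human work needed, including resets and failed episodes."""
        return self.target_hours * self.overhead

    def calendar_days(self) -> float:
        """Wall-clock days if all stations run every day."""
        return self.operator_hours() / (self.n_stations * self.hours_per_day)

plan = CollectionPlan(target_hours=10_000, n_stations=20,
                      hours_per_day=8, overhead=1.5)
print(plan.operator_hours())  # 15000.0
print(plan.calendar_days())   # 93.75
```

Because the cost is linear in the target, reaching a larger dataset means adding stations or days in proportion; unlike web crawling, there is no economy of scale in the collection step itself.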

Solutions for Scaling

1. Synthetic Data

NVIDIA GR00T’s approach:

| Method | Description | Advantages |
|---|---|---|
| Simulation trajectory | Auto-generated in physics simulator | Mass generation, physical validity |
| Neural trajectory | Using video generation models | Diversity, rare scenarios |

Generating 780,000 trajectories took 11 hours, the equivalent of roughly 9 months of continuous human demonstration work.
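The "9 months" figure follows from the 6,500-hour equivalence reported in the GR00T results above, provided "continuous" is read as 24 hours a day. The short check below also shows the same workload under an 8-hour, 5-day working week, which is an added assumption for comparison only.

```python
HUMAN_EQUIV_HOURS = 6_500        # reported human-demo equivalent
DAYS_PER_MONTH = 365.25 / 12     # average calendar month length

# Around-the-clock collection, as the "continuous" wording implies.
days = HUMAN_EQUIV_HOURS / 24            # about 270.8 days
months = days / DAYS_PER_MONTH           # about 8.9 months

# Assumption for comparison: one operator, 8 h/day, 5 days/week, 52 weeks.
work_years = HUMAN_EQUIV_HOURS / (8 * 5 * 52)   # 3.125 working years

print(f"{days:.1f} days, {months:.1f} months, {work_years} working years")
```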

2. Cross-Embodiment Learning

Integrating data from various robots:

- Open X-Embodiment: 22 robot types, 1M+ episodes

- GR00T N1: Single model supports diverse platforms

- π0: Integrated learning across 8 robot platforms
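Combining data from different robots means dealing with action vectors of different lengths. One common way to handle this is to zero-pad every action to a shared maximum dimension and carry a mask so that the loss ignores the padded entries. The sketch below illustrates that idea in plain Python. It is a generic technique, not a description of how any specific model listed above is implemented, and the embodiment names and values are made up.

```python
def pad_action(action, max_dim):
    """Zero-pad an action vector to max_dim; return (padded, mask)."""
    if len(action) > max_dim:
        raise ValueError("action is longer than max_dim")
    pad = max_dim - len(action)
    padded = list(action) + [0.0] * pad
    mask = [1] * len(action) + [0] * pad   # 1 = real dimension, 0 = padding
    return padded, mask

def masked_mse(pred, target, mask):
    """Mean squared error over the valid (mask == 1) dimensions only."""
    errs = [(p - t) ** 2 for p, t, m in zip(pred, target, mask) if m]
    return sum(errs) / len(errs)

# Hypothetical embodiments with different action dimensions.
batch = {
    "single_arm_7dof": [0.1, -0.2, 0.0, 0.3, 0.05, -0.1, 1.0],
    "mobile_base": [0.5, 0.0, -0.1],
}
max_dim = max(len(a) for a in batch.values())
padded = {name: pad_action(a, max_dim) for name, a in batch.items()}

target, mask = padded["mobile_base"]
print(target)  # [0.5, 0.0, -0.1, 0.0, 0.0, 0.0, 0.0]
print(mask)    # [1, 1, 1, 0, 0, 0, 0]
```

The mask matters because, without it, the model would be penalized for whatever it outputs in dimensions that a given robot does not have.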

3. Human Video Utilization

Learning from videos of human behavior rather than from robot data:

- LAPA (GR00T N1): Extracts latent actions from videos without action labels

- π0.5: Co-training with web videos

- Internet-scale video is a potentially vast data source

4. Large-Scale Real Data Collection

Generalist AI’s approach:

- Diverse environments: homes, bakeries, laundromats, warehouses, factories

- 270,000 hours of pure robot data

- Focus on real physical interaction, not simulation

Data Scale Comparison

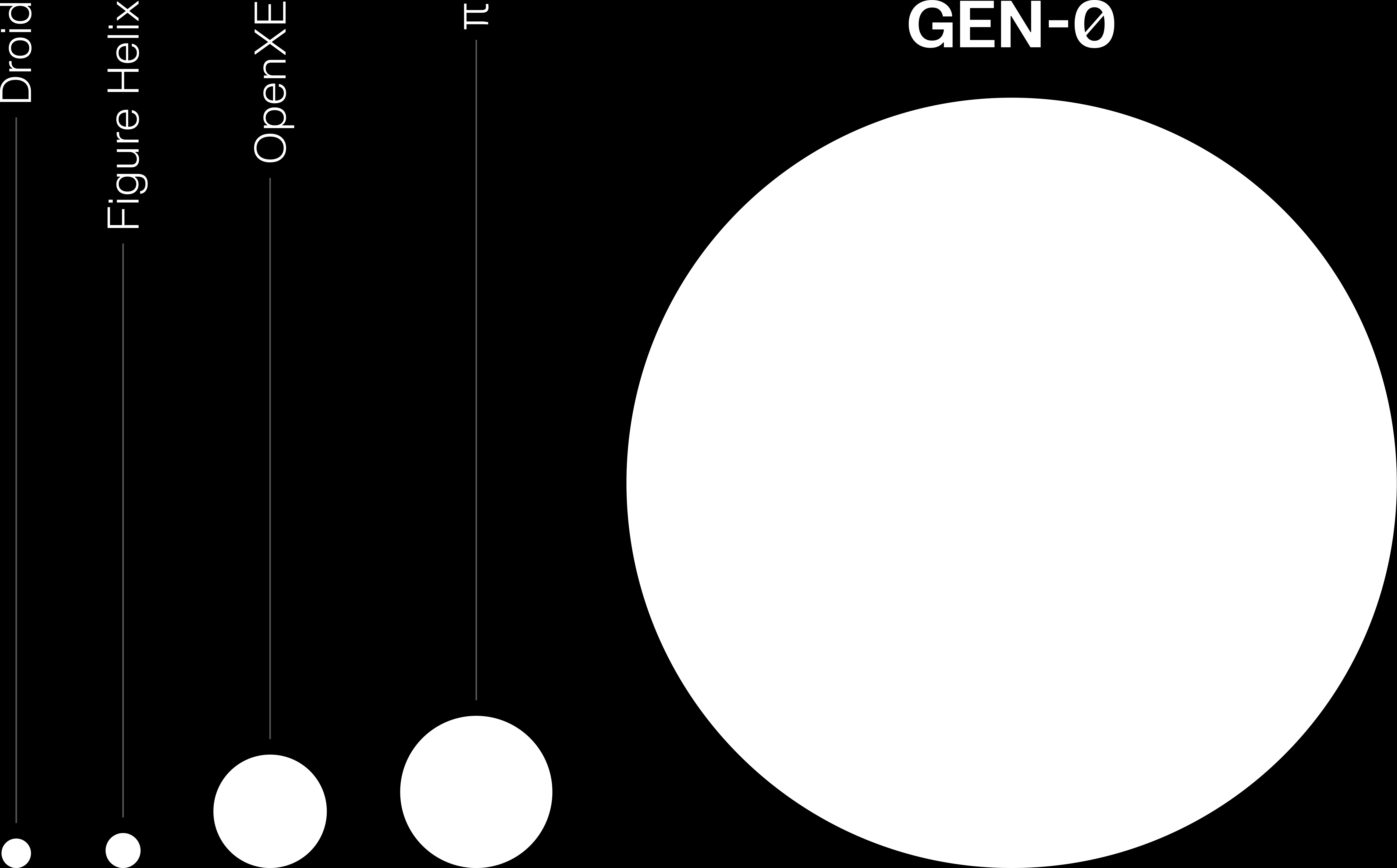

Data scale comparison of major VLA models (Source: Generalist AI)

| Model | Data Scale | Data Type |
|---|---|---|
| Generalist GEN-0 | 270,000 hours | Real robot |
| π0 | 10,000+ hours | Teleoperation |
| GR00T N1 | 88 hours + 780K synthetic | Real + Synthetic |
| Sunday ACT-1 | 10M+ episodes | Gloves (human motion) |

Conclusion: The Possibility of Scaling Laws

Positive Signals

- Generalist AI’s Discovery: Predictable performance improvement with data/compute increase

- Synthetic Data Effect: NVIDIA’s +40% performance improvement report

- 7B Threshold: A phase-transition-like effect, similar to that seen in LLMs, was observed

Remaining Questions

- Verification Needed: Generalist AI’s claims lack external validation

- Data Quality vs Quantity: Is simply increasing quantity enough?

- Real vs Synthetic: Which data is more effective?

- Generalization Limits: Does scaling work for all tasks?

Whether scaling laws will prove as powerful for robotics as they have for LLMs is still uncertain, but early evidence is encouraging. With continued large-scale investment and research, robotics may also have its “GPT moment.”

See Also

- The Action Data Scaling Problem - Fundamental difficulties of data collection

- Generalist GEN-0 - Claims of scaling law discovery

- GR00T N1 - Synthetic data effect validation

- π0 - Large-scale teleoperation data learning

- Teleoperation Approach - Data collection methods